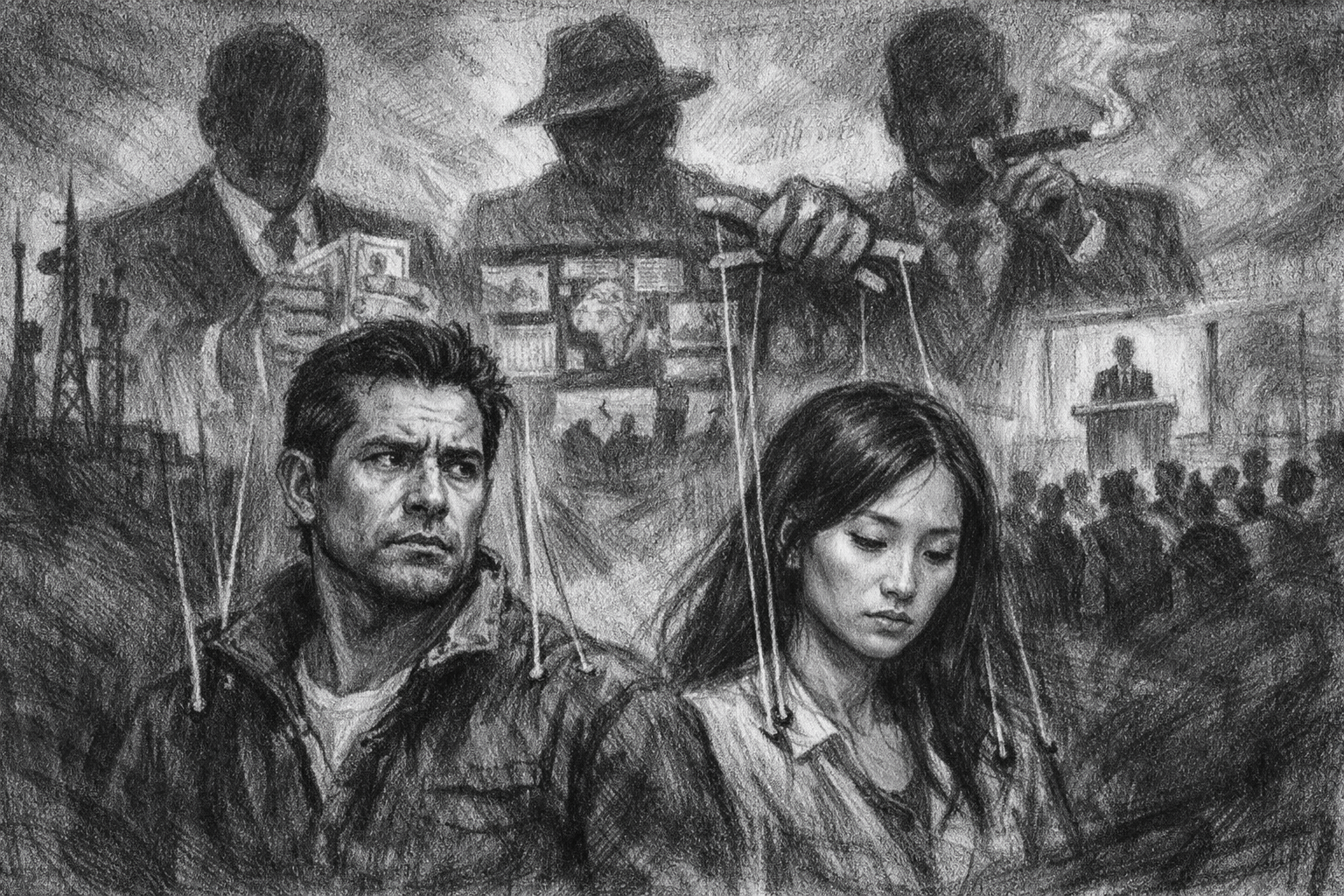

We live in an age of technological brilliance and moral confusion. War is live-streamed. Artificial intelligence can profile us before we speak. Wealth compounds at speeds no prior civilization has witnessed while millions remain economically brittle. And everyone, somehow, claims moral authority.

So instead of writing another opinion piece from a single vantage point, I decided to host a roundtable discussion: a structured moral collision with four thinkers who do not agree on much. I gave them three of the most contested moral questions of our time and told them nothing was off the table.

At the table: Dr. Miriam Hale, a religious ethicist working from a Judeo-Christian framework. Professor Adrian Cole, a secular humanist philosopher. Dr. Lena Voss, a utilitarian policy theorist. And Attorney Marcus Reed, a rights-based constitutional scholar.

Their focus: three major societal developments that are widely considered morally troubling. First, civilian harm in ongoing armed conflicts. Second, AI surveillance and behavioral manipulation. Third, economic inequality in the age of automation.

I moderated. They did not hold back.

Opening Question: Are We in a Moral Crisis?

Canty: Before we tackle each issue, I want to ask something foundational. Are we in a uniquely immoral era, or are we just more aware of our failures?

Dr. Miriam Hale (Religious): We are not more immoral than ancient civilizations, but we are more arrogant. Human nature hasn’t changed. Violence, greed, domination are constants. What has changed is our power. We now possess technological capabilities that amplify sin at scale. When ancient kingdoms slaughtered civilians, they did it with swords. Now we annihilate cities remotely and call it “strategic containment.” The moral crisis is not new evil. It is scaled evil without repentance. What disturbs me most is not that people do wrong. It is that we have lost the language of moral accountability. We speak in terms of efficiency and geopolitics and rarely use words like guilt, responsibility, or moral injury. That absence is new.

Professor Adrian Cole (Secular Humanist): I wouldn’t frame it as sin. I’d frame it as institutional failure. We are not uniquely depraved. We are uniquely interconnected. Our systems (economic, technological, military) operate globally, but our moral imagination hasn’t kept pace. The crisis is structural. We have built systems that incentivize short-term gain over long-term human flourishing. Leaders optimize for elections. Corporations optimize for quarterly earnings. Social media optimizes for engagement. The problem isn’t that humans are evil. It’s that our systems reward behavior that consistently undermines human dignity.

Dr. Lena Voss (Utilitarian): I think both of you are being too abstract. The question is measurable: are people better off overall? On many metrics (life expectancy, extreme poverty reduction, medical advancement), humanity has improved dramatically. But we are entering a period where marginal gains are unevenly distributed and risks are escalating. AI and autonomous warfare introduce catastrophic downside scenarios. The moral question isn’t “Are we sinful?” It’s “Are we increasing or decreasing aggregate well-being?” Right now, we are playing with tools that could either massively improve human life or cause immense suffering. That is the inflection point.

Attorney Marcus Reed (Rights-Based): The moral crisis is simple: rights are eroding. War crimes are excused. Privacy is treated as obsolete. Property rights are distorted by crony capitalism. Free speech is selectively protected. You don’t need theology or a happiness calculus to see the issue. When individual rights are subordinated to “collective goals” or “national interest,” abuse reliably follows. The 20th century taught us that lesson in blood. We seem eager to forget it.

Topic One: Civilian Harm in Armed Conflicts

Canty: Ongoing wars continue to result in civilian casualties, displacement, and infrastructure collapse. Each side claims justification. Is modern warfare morally defensible in its current form?

Hale: War is sometimes necessary. That is the uncomfortable truth. The religious tradition I represent includes just war theory, and war can be morally justified under strict conditions. But what I see today is moral laziness. Proportionality is routinely violated. Civilian suffering is framed as collateral damage rather than moral tragedy. Entire populations are dehumanized to justify strategic goals. When leaders make decisions that foreseeably cause civilian death, they bear moral responsibility before God, whether or not international courts prosecute them. We have normalized distant killing. Drone strikes feel like video games. Moral distance reduces empathy. If you cannot look at the child killed in your operation and still defend your action before a moral law higher than your nation, you are not acting justly.

Cole: I agree with the emotional core, though not the theological framing. The problem is dehumanization. National narratives allow us to see “them” as abstractions. Civilians become statistics. We must center human rights and international law not as political tools but as universal commitments. Civilian immunity should not be a negotiable principle. However, there are tragic dilemmas. If one regime commits aggression and embeds military infrastructure in civilian areas, defenders face horrific trade-offs. The moral failure is not only in the battlefield decision. It is in the geopolitical conditions that allowed the conflict to escalate in the first place.

Voss: Let’s be honest: in some cases, civilian harm in war may reduce total long-term suffering. If ending a conflict quickly requires aggressive force that results in fewer total deaths compared to a prolonged war, a utilitarian framework may justify it. That sounds cold, but moral decision-making in war is about minimizing overall harm, not preserving moral purity. The key question is evidence. Are leaders actually calculating long-term harm, or are they rationalizing violence for political advantage? If civilian harm is strategically ineffective or escalatory, then it is both immoral and foolish.

Reed: This is exactly why utilitarian reasoning is dangerous. Once you start weighing civilian lives against hypothetical future outcomes, you open the door to atrocity. Individual rights are not bargaining chips. A civilian has a right not to be intentionally targeted. Period. If military strategy relies on violating that right, the strategy is unjust, even if someone can construct a model predicting long-term benefit. The erosion of rights during war becomes the precedent for erosion during peace. Governments rarely relinquish power voluntarily after emergencies.

Voss: So if refraining from a decisive strike leads to prolonged war and tens of thousands more deaths, you’d still prioritize strict rights adherence?

Reed: Yes. Because once you permit intentional violation of innocent rights, you destroy the moral foundation that distinguishes defense from aggression.

Hale: There is a bridge here. Just war theory does not permit intentional targeting of civilians. It does recognize tragic side effects, but only when the intention is morally ordered and proportional. Intent matters.

Cole: Intent matters, but so do systems. We need stronger international enforcement mechanisms. Otherwise, moral language is just rhetoric without consequence.

Topic Two: AI Surveillance and Behavioral Manipulation

Canty: Governments and corporations are using data, facial recognition, and predictive algorithms at massive scale. Supporters argue it improves safety and efficiency. Critics argue it erodes privacy and autonomy. Moral line or moral panic?

Reed: This is the clearest moral violation of our time. Mass surveillance without explicit consent is an assault on individual liberty. Predictive policing systems treat people as statistical probabilities rather than rights-bearing individuals. Social credit systems, whether state-run or corporate, condition access to opportunity based on behavioral conformity. Privacy is not nostalgia. It is a prerequisite for freedom of thought. If every action is monitored, individuals self-censor. That chills dissent. A society without dissent drifts toward authoritarianism. We are sleepwalking into digital control structures.

Voss: AI surveillance has prevented crimes, optimized resource allocation, and improved healthcare diagnostics. If data collection reduces violent crime or predicts infrastructure failures, the aggregate benefit could be enormous. The moral question isn’t whether surveillance exists. It’s how it’s governed. We need guardrails, transparency, and oversight. Abandoning high-impact tools because of theoretical abuse is not a principled position. It is irrational.

Hale: I find this deeply troubling, and not only because of political liberty. There is something spiritually corrosive about constant monitoring. Human beings require interior space, a realm where conscience forms without external coercion. If algorithms constantly nudge behavior, we risk engineering compliance rather than cultivating virtue. Virtue must be chosen freely. When corporations manipulate attention to maximize engagement, they exploit weakness rather than strengthen character. That is not morally neutral innovation. It is commodified temptation.

Cole: I agree that manipulation is the key issue. There is a real difference between using data to improve public health and using it to shape political beliefs or consumer behavior without awareness or consent. Autonomy is central to human dignity. Behavioral nudging that bypasses reflective consent undermines that autonomy. But let’s avoid romanticizing the pre-digital world. Governments have always surveilled. Corporations have always manipulated. The scale is new, but so is public scrutiny. The moral solution is democratic governance of AI, not technophobia.

Reed: Democratic governance fails when citizens are already being manipulated by the very systems they are supposed to regulate. If information feeds are algorithmically curated to shape perception, public consent becomes engineered. That is the real danger.

Voss: Or it becomes more efficient information delivery. Not every algorithm is propaganda.

Hale: But many are optimized for profit rather than truth. That distinction matters enormously.

Cole: That is the structural flaw. Profit-driven engagement incentives distort information ecosystems in ways that serve platforms, not people.

Topic Three: Economic Inequality in the Age of Automation

Canty: Automation and AI are generating enormous productivity gains, yet wealth concentration accelerates. Is extreme inequality morally wrong, or simply an outcome of innovation?

Voss: Inequality is not inherently immoral. What matters is whether the worst-off are improving in absolute terms. If automation increases overall wealth and raises baseline living standards, even if billionaires multiply, the net outcome could still be positive. The moral danger arises if automation creates structural unemployment without social adaptation. Policy must focus on maximizing total well-being: retraining programs, universal basic income experiments, redistribution mechanisms where necessary. But envy is not a moral argument.

Reed: Agreed on envy. If wealth is acquired through voluntary exchange and innovation, it is morally legitimate. The problem is not inequality itself. It is cronyism, regulatory capture, and corporate-state entanglement. When the state distorts markets in favor of entrenched players, opportunity shrinks. That is a rights violation. Protect property rights, enforce contracts, prevent coercion. The rest is not the government’s moral domain.

Cole: I think that framing is too narrow. Massive inequality erodes democratic equality. When economic power translates into political influence, formal rights remain on paper but substantive equality collapses in practice. A society where a small elite controls information infrastructure, capital flows, and lobbying power risks undermining equal citizenship entirely. Human dignity includes the capacity to meaningfully participate in civic life. Extreme inequality corrodes that capacity over time.

Hale: Scripture does not condemn wealth. It condemns indifference. When technological systems displace workers while executives receive exponential compensation and communities collapse, that is not neutral market efficiency. That is moral failure. A society must ask not only “Is it legal?” but “Is it loving?” Do we design economic systems to maximize profit, or to cultivate human flourishing? Work is not only income. It is dignity, purpose, and participation. Automation that strips meaning without restoring it creates spiritual impoverishment that numbers in a GDP report will never capture.

Voss: But resisting automation would freeze progress and harm future generations.

Hale: I am not advocating resistance. I am advocating responsibility.

Reed: Responsibility should be voluntary, not mandated through coercive redistribution.

Cole: Yet voluntary responsibility has not corrected structural inequality. Decades of evidence make that clear.

Final Question: Which Issue Is Most Morally Urgent?

Canty: If you had to prioritize, which of these three poses the greatest moral threat?

Hale: AI manipulation. War is ancient. Inequality is recurring. But systematic engineering of human behavior through technology could reshape the moral agency of entire generations. If we lose the capacity for free moral choice, we lose the soul of civilization.

Cole: I would say inequality intertwined with technological control. When economic and informational power concentrate together, democracy weakens over time. The long-term risk is oligarchic drift that most people won’t notice until it’s too late to reverse.

Voss: Autonomous weapons combined with AI escalation risks. A miscalculation amplified by machine-speed decision systems could cause catastrophic loss of life. The scale of potential harm makes it the most urgent problem on this list.

Reed: Surveillance infrastructure. Once normalized, it becomes nearly impossible to dismantle. It reshapes the relationship between citizen and state permanently. Freedom erodes quietly, not explosively. That is what makes it so dangerous.

Closing Reflection

As moderator, I expected disagreement. What surprised me was convergence. All four voices, despite radically different moral foundations, expressed deep concern about the same cluster of dangers: dehumanization, concentrated power, loss of autonomy, and moral shortcuts justified by efficiency.

The religious voice warned of sin amplified by technology. The humanist warned of systems misaligned with dignity. The utilitarian warned of catastrophic risk and measurable suffering. The rights-based scholar warned of liberty quietly dissolving.

They did not agree on solutions. They did not agree on ultimate foundations. But they agreed on this: we are building systems faster than we are building moral consensus about how to govern them. And that may be the most morally questionable fact of all.

Ronnie Canty | The Canty Effect